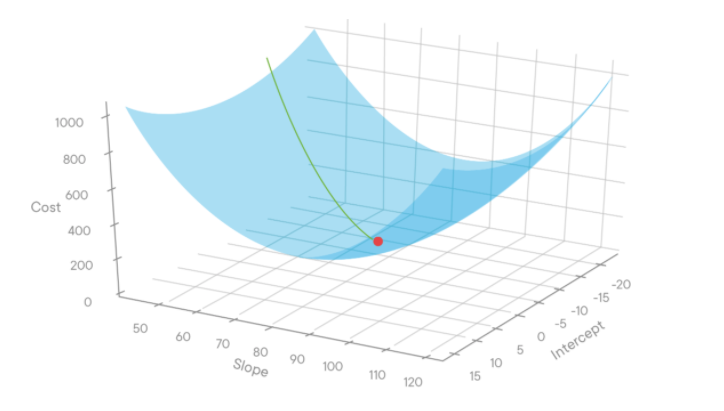

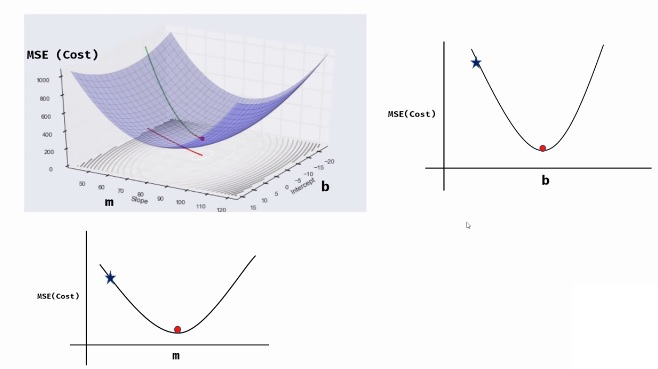

Machine Learning Tutorial Python 4: Gradient Descent and Cost Function

In this tutorial, we cover a few important concepts in machine learning: the cost function, gradient descent, the learning rate, and mean squared error (MSE). Gradient descent is an iterative optimization algorithm; here we use it to find the best-fit line for a given dataset. If we plot the cost function (MSE) in 3D against different values of the slope m and the intercept b, it looks like the figure below (source: miro.medium). In the figure, the intercept is b, the slope is m, and the cost is the MSE.

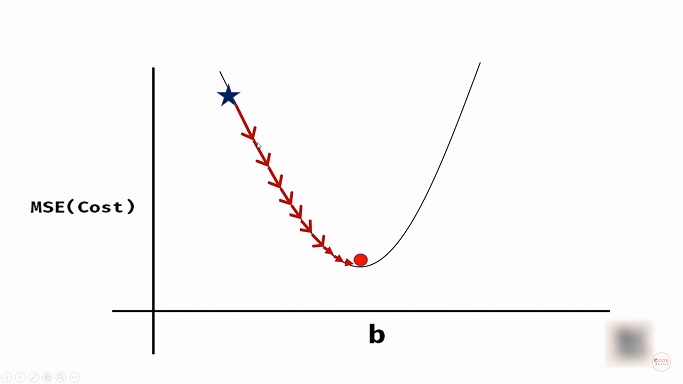
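To make the cost surface concrete, here is a minimal pure-Python sketch that computes the MSE of the line y = mx + b, that is MSE(m, b) = (1/n) Σ (yᵢ − (m·xᵢ + b))², and evaluates it on a coarse grid of (m, b) values. The helper name `mse` and the toy data are hypothetical, chosen only for illustration.

```python
def mse(m, b, xs, ys):
    """Mean squared error of the line y = m*x + b over the points (xs, ys)."""
    n = len(xs)
    return sum((y - (m * x + b)) ** 2 for x, y in zip(xs, ys)) / n


# Hypothetical toy data that lies exactly on y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 11.0]

# Evaluate the cost on a coarse grid: m in 0.0..4.0 and b in -1.0..3.0, step 0.5.
grid = [(i / 2, j / 2) for i in range(0, 9) for j in range(-2, 7)]
costs = {(m, b): mse(m, b, xs, ys) for m, b in grid}

# The lowest point of the "bowl" is the best-fit slope and intercept.
best = min(costs, key=costs.get)
print(best, costs[best])  # → (2.0, 1.0) 0.0
```

Evaluating every grid point is only practical for two parameters and is shown here to picture the bowl; gradient descent instead walks downhill from a single starting point.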

Gradient Descent And Cost Function In Python Machine Learning

To implement gradient descent for linear regression, we follow four steps:

1. Randomly initialize the bias and the weights (θ).
2. Calculate the predicted value of y (ŷ) from the bias and the weights.
3. Calculate the cost function from the predicted and actual values of y.
4. Calculate the gradients and update the weights.

More generally, to find the $w$ at which a function $f(w)$ attains its minimum, gradient descent proceeds as follows:

1. Choose an initial random value of $w$.
2. Choose the maximum number of iterations $T$.
3. Choose a value for the learning rate $\eta \in [a, b]$.
4. Repeat the update $w \leftarrow w - \eta \nabla_w f(w)$ until $f$ no longer changes or the number of iterations exceeds $T$.

Gradient descent updates the parameters iteratively during learning by computing the gradient of the cost function with respect to the parameters. The parameters move in the direction opposite to the gradient: if the gradient is positive, the parameters decrease, and if it is negative, they increase. In terms of the training loop:

1. The cost function gives you a value that you would like to reduce.
2. Using gradient descent, you update the thetas by a step equal to the learning rate α times the gradient.
3. With the new set of thetas, you calculate the cost again.
4. You keep repeating steps 2 and 3 until the cost stops decreasing, i.e. until you reach a minimum of the cost function.
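The general procedure above (random start, iteration budget T, learning rate η, stop when f stops changing) can be sketched in plain Python as follows. The function names, the example function f(w) = (w − 3)², the learning rate 0.1, and the tolerance `tol` used to decide that "f does not change" are all hypothetical choices for illustration.

```python
import random


def gradient_descent(f, grad, w0, learning_rate=0.1, max_iters=1000, tol=1e-12):
    """Minimize a one-variable function f by gradient descent.

    Stops when f changes by less than tol between two iterations, or
    after max_iters updates (the iteration budget T).
    Returns the final w and the number of updates performed.
    """
    w = w0
    prev = f(w)
    iters = 0
    while iters < max_iters:
        w = w - learning_rate * grad(w)   # positive gradient -> w decreases
        iters += 1
        cur = f(w)
        if abs(prev - cur) < tol:         # f has (numerically) stopped changing
            break
        prev = cur
    return w, iters


def f(w):
    return (w - 3.0) ** 2        # example function with its minimum at w = 3


def grad_f(w):
    return 2.0 * (w - 3.0)       # derivative of f


random.seed(0)
w0 = random.uniform(-10.0, 10.0)  # random initial value of w
w_min, iters = gradient_descent(f, grad_f, w0, learning_rate=0.1, max_iters=1000)
print(f"w = {w_min:.6f} after {iters} iterations")
```

Starting from w = 5, the gradient is 2·(5 − 3) = 4, so one step with η = 0.1 moves w down to 4.6, which is the "positive gradient, parameter decreases" rule in action.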

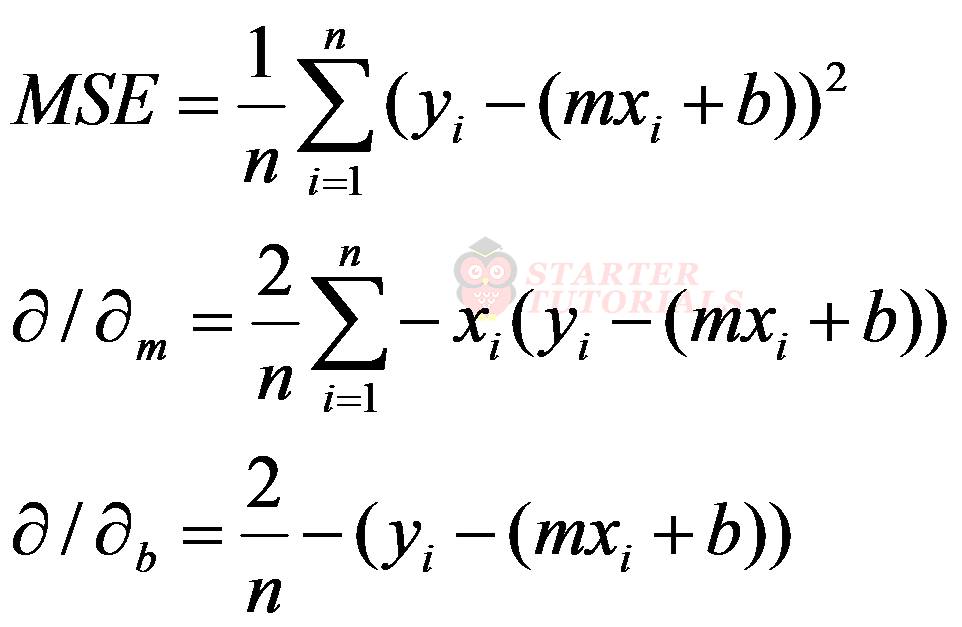

Gradient descent is an optimization algorithm used to find the values of the parameters (coefficients) of a function $f$ that minimize a cost function. It is best used when the parameters cannot be calculated analytically (e.g. using linear algebra) and must be searched for by an optimization algorithm.

For the line $\hat{y}_i = w x_i + b$ with the mean squared error as the cost,

$$
\text{MSE} = \frac{1}{n}\sum_{i=1}^{n} (\hat{y}_i - y_i)^2,
$$

implementing gradient descent requires the gradient of the cost with respect to the parameters $w$ and $b$:

$$
dw = \frac{\partial\,\text{MSE}}{\partial w} = \frac{2}{n}\sum_{i=1}^{n} (\hat{y}_i - y_i)\,x_i,
\qquad
db = \frac{\partial\,\text{MSE}}{\partial b} = \frac{2}{n}\sum_{i=1}^{n} (\hat{y}_i - y_i)
$$

Next, we update the parameters using the gradients and the learning rate $\alpha$:

$$
w \leftarrow w - \alpha\, dw, \qquad b \leftarrow b - \alpha\, db
$$
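Putting the four implementation steps and the dw/db formulas together gives a minimal pure-Python sketch of batch gradient descent for a straight line. The function name `fit_line`, the toy data, `learning_rate=0.01`, and `epochs=10_000` are hypothetical choices for illustration, not values from the tutorial; a fixed number of epochs stands in for the "until the cost stops decreasing" check to keep the sketch short.

```python
import random


def fit_line(xs, ys, learning_rate=0.01, epochs=10_000, seed=0):
    """Fit y ≈ w*x + b with batch gradient descent on the MSE cost.

    Returns the learned weight, bias, and final MSE.
    """
    n = len(xs)
    rng = random.Random(seed)
    w, b = rng.random(), rng.random()        # step 1: random initial weight and bias

    for _ in range(epochs):
        y_pred = [w * x + b for x in xs]     # step 2: predictions with current w, b
        errors = [yp - yt for yp, yt in zip(y_pred, ys)]
        dw = (2 / n) * sum(e * x for e, x in zip(errors, xs))  # dMSE/dw
        db = (2 / n) * sum(errors)                              # dMSE/db
        w -= learning_rate * dw              # step 4: move against the gradient
        b -= learning_rate * db

    # step 3, computed once at the end here: the MSE of the fitted line
    cost = sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / n
    return w, b, cost


# Hypothetical toy data that lies exactly on y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 11.0]

w, b, cost = fit_line(xs, ys)
print(f"w = {w:.4f}, b = {b:.4f}, mse = {cost:.2e}")
```

The learning rate matters: for this particular toy data, values above roughly 0.085 make the updates overshoot and diverge, while very small values converge correctly but need many more epochs.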


