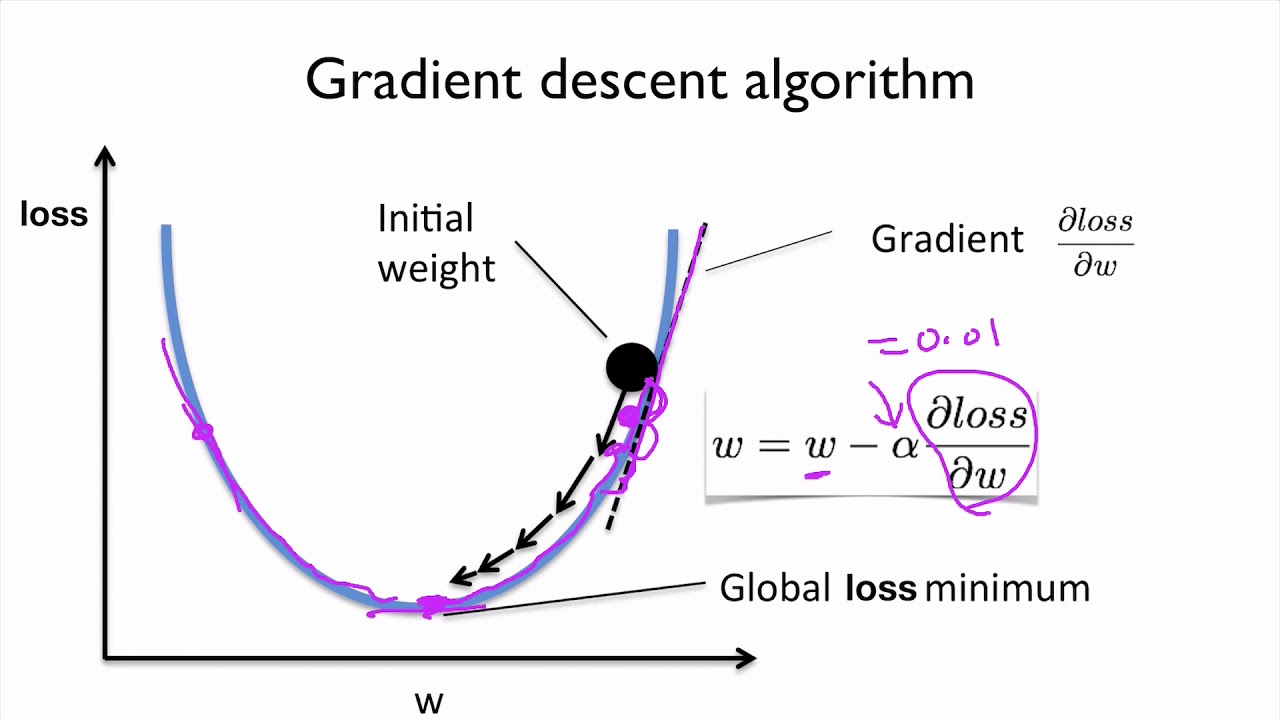

Linear Regression From Scratch Pt2 The Gradient Descent Algorithm By

Image source: GitHub.

Variants of gradient descent. There are generally three variants of the gradient descent algorithm, one of which is batch gradient descent.

In this tutorial you can learn how the gradient descent algorithm works and implement it from scratch in Python. First we look at what linear regression is, then we define the loss function. We learn how the gradient descent algorithm works, and finally we implement it on a given data set and make predictions.
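To make the idea of a loss function concrete, here is a minimal sketch of a one-variable linear model scored with mean squared error. The choice of mean squared error, the names `predict` and `mse_loss`, and the toy data are assumptions made for illustration; the tutorial text above does not say which loss it uses.

```python
def predict(x_values, slope, intercept):
    """Linear model: one prediction per input value."""
    return [slope * x + intercept for x in x_values]


def mse_loss(y_true, y_pred):
    """Mean squared error between targets and predictions (assumed loss)."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical toy data generated from y = 2x + 1.
x_values = [0.0, 1.0, 2.0, 3.0]
y_true = [1.0, 3.0, 5.0, 7.0]

# The true parameters give zero loss; a poor guess gives a larger loss.
perfect_loss = mse_loss(y_true, predict(x_values, 2.0, 1.0))
poor_loss = mse_loss(y_true, predict(x_values, 0.0, 0.0))
print(perfect_loss, poor_loss)
```

A lower loss means the line fits the data better, which is the quantity gradient descent tries to reduce.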

This is a comprehensive guide to understanding gradient descent. We'll cover the entire process from scratch, providing an end-to-end view. Plus, witness a v.

Deriving gradient descent. Although it was first suggested in 1847, gradient descent is still one of the most common optimization algorithms in machine learning. The main idea is to find a local minimum of a differentiable function by iteratively taking small steps in the opposite direction of the gradient.

Steps required in the gradient descent algorithm:

1. Initialize the parameters of the model randomly.
2. Compute the gradient of the cost function with respect to each parameter. This involves taking the partial derivative of the cost function with respect to each parameter.

In the accompanying code, the line `x = np.hstack((np.ones((x.shape[0],1)), x))` adds an extra column of ones to the beginning of x, so that the intercept term is handled by the same matrix multiplication as the other parameters. After this we initialize the theta vector with zeros. You can also initialize it with small random values.
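The column-of-ones trick and the zero-initialized theta vector described above can be sketched as a small batch gradient descent loop. This is an illustrative sketch, not the original article's code: the function name `batch_gradient_descent`, the mean squared error cost, the learning rate, the iteration count, and the toy data are all assumptions.

```python
import numpy as np


def batch_gradient_descent(x, y, learning_rate=0.1, n_iterations=2000):
    """Fit theta for a linear model using the full data set at every step."""
    # Prepend a column of ones so theta[0] acts as the intercept.
    x = np.hstack((np.ones((x.shape[0], 1)), x))
    # Initialize theta with zeros (small random values would also work).
    theta = np.zeros(x.shape[1])
    n_samples = x.shape[0]

    for _ in range(n_iterations):
        residuals = x @ theta - y
        # Gradient of the mean squared error cost with respect to theta.
        gradient = (2.0 / n_samples) * (x.T @ residuals)
        # Step in the opposite direction of the gradient.
        theta -= learning_rate * gradient
    return theta


# Hypothetical toy data generated from y = 2x + 1.
x = np.array([[0.0], [1.0], [2.0], [3.0]])
y = np.array([1.0, 3.0, 5.0, 7.0])

theta = batch_gradient_descent(x, y)
print(theta)  # theta[0] is the intercept, theta[1] the slope
```

Because the ones column is folded into `x`, the intercept needs no separate update rule: it is simply the first entry of `theta`.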

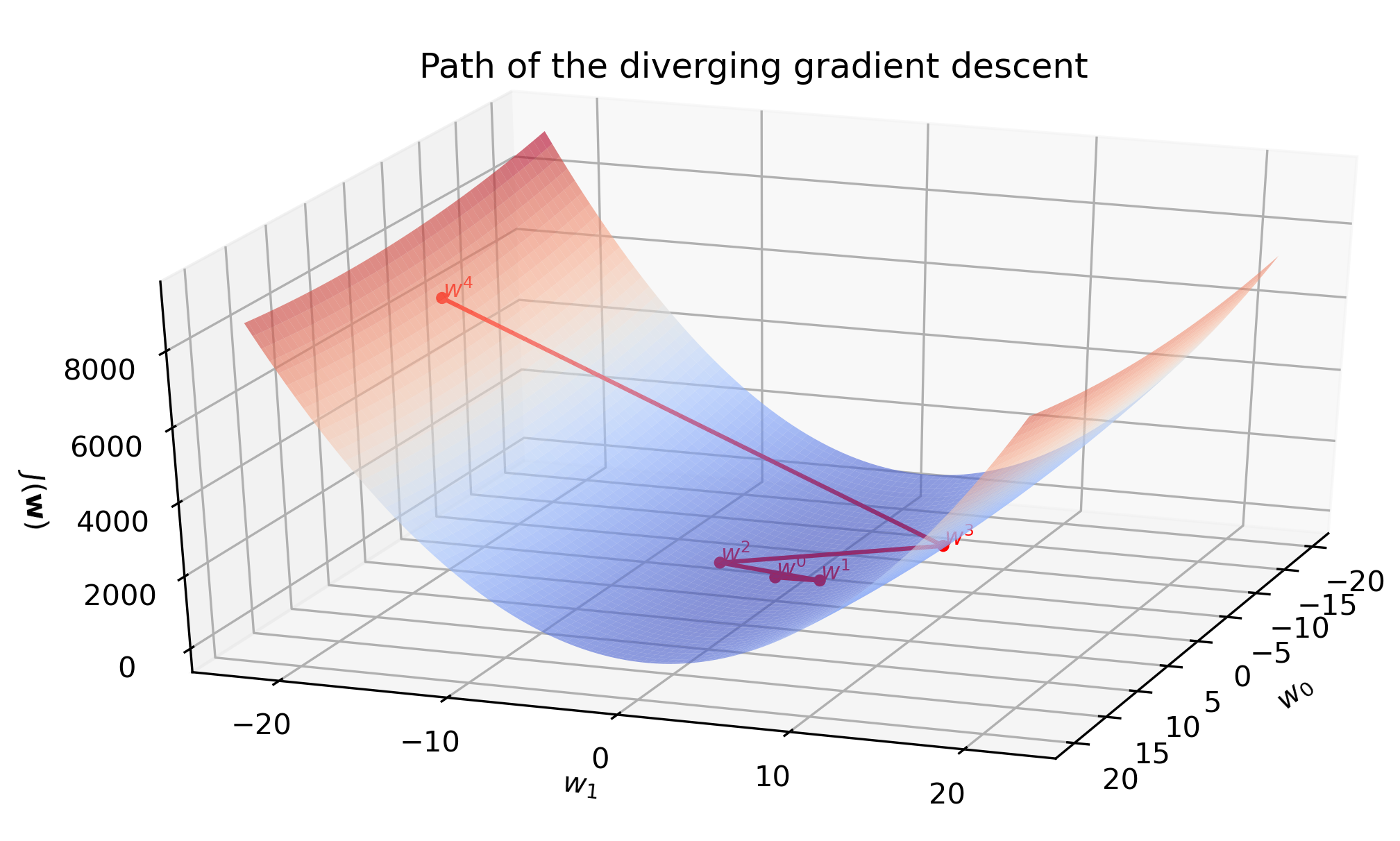

Gradient Descent With Linear Regression From Scratch In Python

1) Linear regression from scratch using gradient descent. First, let’s have a look at the fit method in the linearreg class. Fitting: we initialize the weights and biases as zeros, then start the loop for the given number of epochs (iterations). Inside the loop, the first step is to generate predictions.

The gradient descent algorithm requires a target function that is being optimized and the derivative function for that target function. The target function f() returns a score for a given set of inputs, and the derivative function f'() gives the derivative of the target function for a given set of inputs.

Objective function: calculates a score.
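The fit loop and the objective/derivative pairing described above can be sketched together as follows. This is a hedged reconstruction rather than the original class: the capitalized name `LinearReg`, the helper names `objective` and `derivative`, the mean squared error score, the hyperparameter defaults, and the toy data are all assumptions.

```python
import numpy as np


def objective(y_true, y_pred):
    """Target function: returns a score (assumed here to be mean squared error)."""
    return float(np.mean((y_pred - y_true) ** 2))


def derivative(X, y_true, y_pred):
    """Derivative of the objective with respect to the weights and the bias."""
    error = y_pred - y_true
    grad_weights = (2.0 / len(y_true)) * (X.T @ error)
    grad_bias = 2.0 * float(np.mean(error))
    return grad_weights, grad_bias


class LinearReg:
    """Hypothetical stand-in for the linearreg class mentioned in the text."""

    def __init__(self, learning_rate=0.05, epochs=5000):
        self.learning_rate = learning_rate
        self.epochs = epochs
        self.weights = None
        self.bias = 0.0
        self.history = []

    def predict(self, X):
        return X @ self.weights + self.bias

    def fit(self, X, y):
        # Initialize weights and bias as zeros.
        self.weights = np.zeros(X.shape[1])
        self.bias = 0.0
        self.history = []
        for _ in range(self.epochs):
            # First step inside the loop: generate predictions.
            y_pred = self.predict(X)
            self.history.append(objective(y, y_pred))
            # Then move the parameters against the gradient.
            grad_weights, grad_bias = derivative(X, y, y_pred)
            self.weights -= self.learning_rate * grad_weights
            self.bias -= self.learning_rate * grad_bias
        return self


# Hypothetical toy data generated from y = 2x + 1.
X = np.array([[0.0], [1.0], [2.0], [3.0]])
y = np.array([1.0, 3.0, 5.0, 7.0])

model = LinearReg().fit(X, y)
print(model.weights, model.bias)
print(model.predict(np.array([[4.0]])))
```

Keeping `objective` and `derivative` as separate functions mirrors the f() and f'() split in the text: the loop only needs the derivative to update the parameters, while the objective is recorded in `history` to check that the score is falling.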
