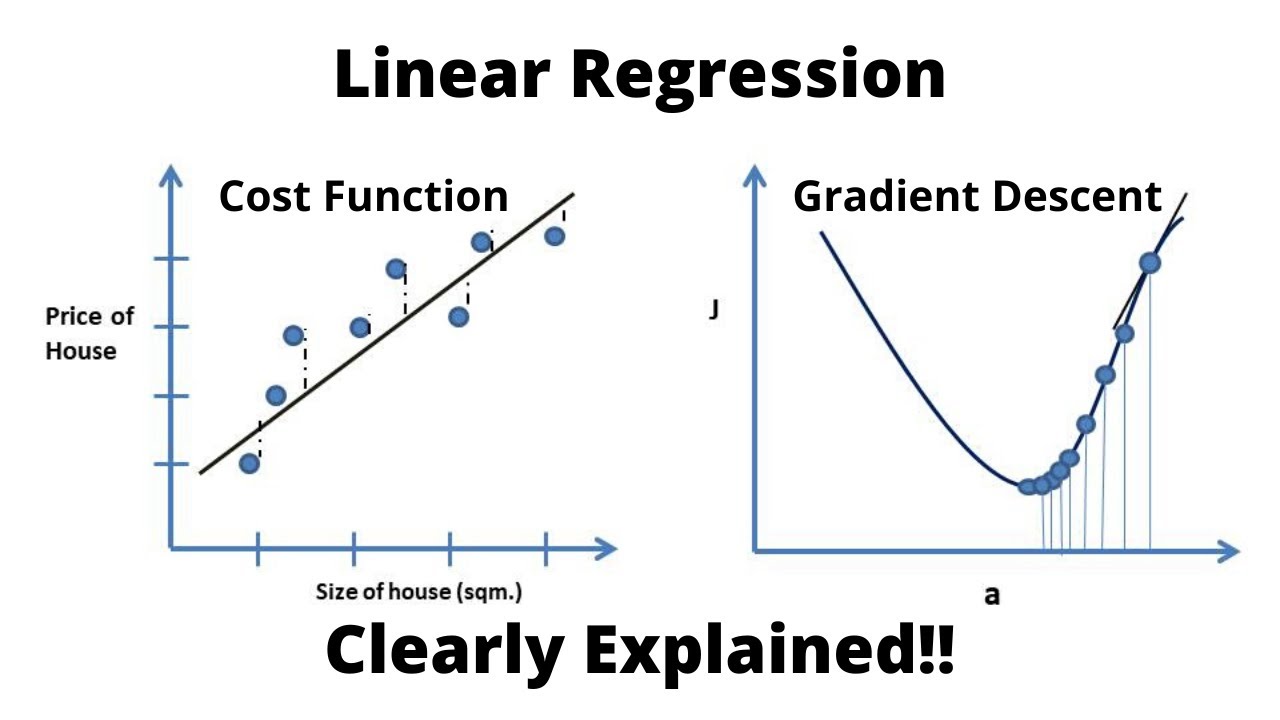

Linear Regression Cost Function And Gradient Descent Algorithm

This can be solved by an algorithm called gradient descent, which finds a local minimum of the cost function, that is, the values of c1 and c2 for which the cost is lowest. For the usual squared-error cost of linear regression the cost is convex, so this local minimum is also the global one.

Gradient descent is one of the most famous techniques in machine learning and is used for training all sorts of neural networks. It is not limited to neural networks, though: it can train many other machine learning models, and in particular a linear regression model.
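As a rough illustration of how gradient descent can fit a line, here is a minimal sketch. It assumes the line is written as y = c1 + c2 * x (the text does not say which of c1 and c2 is the slope) and that the cost is the halved mean squared error. The function names, the learning rate `alpha`, the step count, and the toy data are illustrative choices, not taken from the source.

```python
def cost(c1, c2, xs, ys):
    """Halved mean squared error of the line y = c1 + c2 * x (assumed cost)."""
    n = len(xs)
    return sum((c1 + c2 * x - y) ** 2 for x, y in zip(xs, ys)) / (2 * n)


def gradient_descent(xs, ys, alpha=0.05, steps=5000):
    """Fit c1 (intercept) and c2 (slope) by batch gradient descent."""
    c1, c2 = 0.0, 0.0  # arbitrary starting point
    n = len(xs)
    for _ in range(steps):
        # Prediction error for every data point under the current parameters.
        errors = [c1 + c2 * x - y for x, y in zip(xs, ys)]
        # Partial derivatives of the cost with respect to c1 and c2.
        grad_c1 = sum(errors) / n
        grad_c2 = sum(e * x for e, x in zip(errors, xs)) / n
        # Step against the gradient, scaled by the learning rate.
        c1 -= alpha * grad_c1
        c2 -= alpha * grad_c2
    return c1, c2


# Toy data generated from y = 1 + 2x, with no noise.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

c1, c2 = gradient_descent(xs, ys)
print(c1, c2, cost(c1, c2, xs, ys))
```

On this noise-free data the fitted values should approach c1 = 1 and c2 = 2. A learning rate that is too large for a given dataset can make the updates diverge, so `alpha` usually needs tuning.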

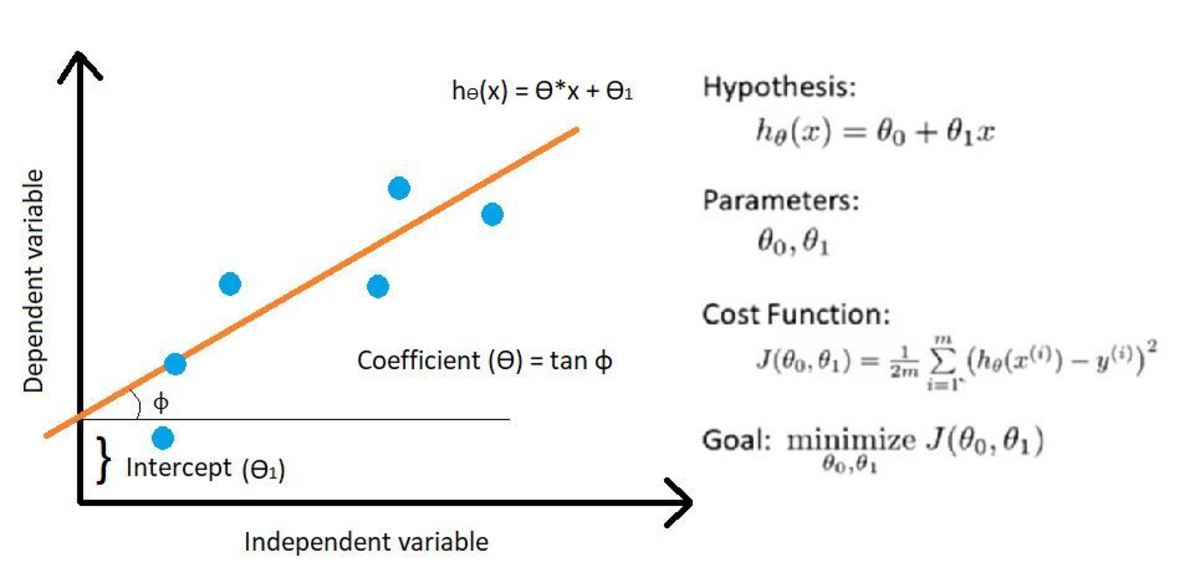

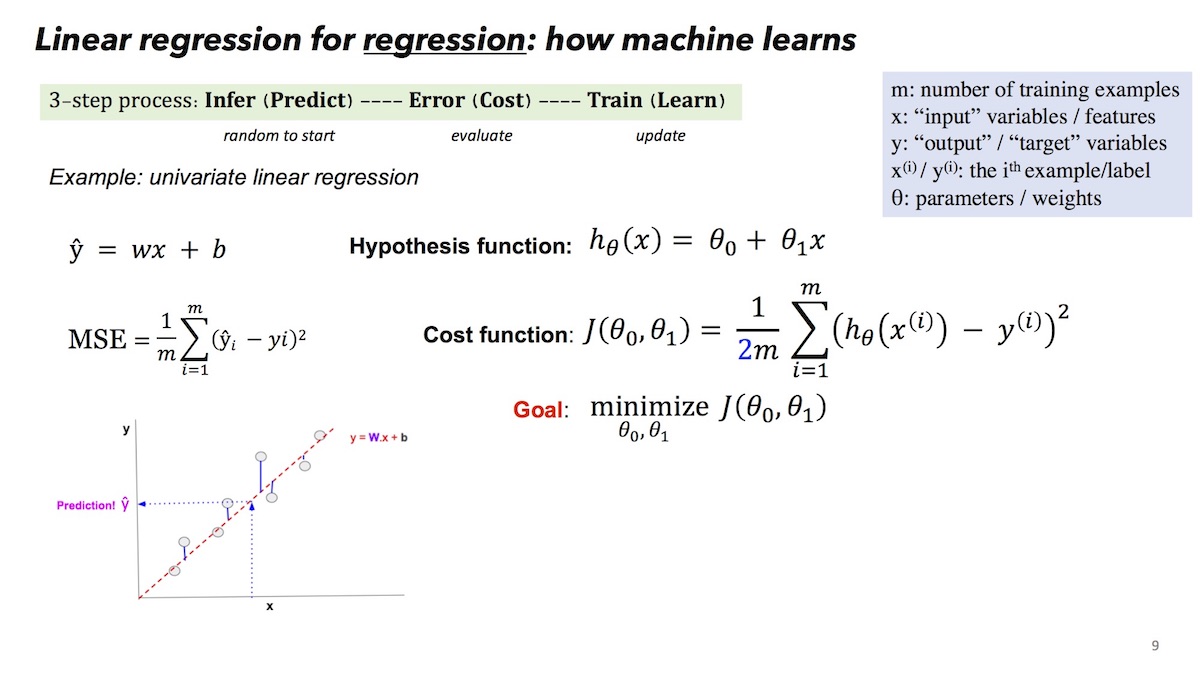

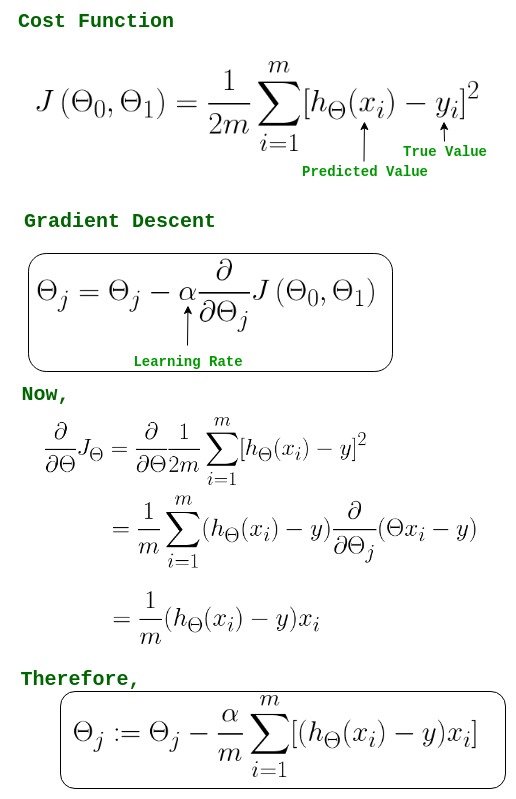

Understanding Gradient Descent Optimization Algorithm Turbofuture

Gradient descent is a tool to arrive at the line of best fit. Before we dig into gradient descent, let's first look at another way of computing the line of best fit, the statistics way. A line can be represented by the formula y = mx + b, where m is the slope and b is the intercept. The statistics approach computes the slope m of the regression line directly from a formula.

3. You calculate the cost using the cost function, which measures the distance between the line you drew and the original data points.
4. The cost function gives you some value that you would like to reduce.
5. Using gradient descent, you update the thetas by stepping against the gradient of the cost, with the step size scaled by the learning rate alpha.
6. With the new set of theta values, you calculate the cost again.

What is gradient descent? Gradient descent is an iterative optimization algorithm that tries to find the optimum value (minimum/maximum) of an objective function. It is one of the most used optimization techniques in machine learning projects for updating the parameters of a model in order to minimize a cost function.

Gradient descent is a method for finding the minimum of a function of multiple variables, so we can use it as a tool to minimize our cost function. Suppose we have a function with n variables; then the gradient is the length-n vector that points in the direction in which the function is increasing most rapidly. Gradient descent therefore moves in the opposite direction.
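For comparison with the iterative approach, the statistics route to the line of best fit mentioned above can be sketched as follows. This assumes the standard ordinary least squares formulas, with the slope computed as the sum of (x - mean of x)(y - mean of y) divided by the sum of (x - mean of x) squared, and the intercept as mean of y minus slope times mean of x. The function name `fit_line_closed_form` and the sample data are hypothetical.

```python
def fit_line_closed_form(xs, ys):
    """Return (m, b) for the least squares line y = m * x + b."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Sum of products of deviations of x and y from their means.
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    # Sum of squared deviations of x from its mean.
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all x values are identical; the slope is undefined")
    m = sxy / sxx
    b = y_mean - m * x_mean
    return m, b


# Sample points that lie exactly on y = 2x + 1.
m, b = fit_line_closed_form([1.0, 2.0, 3.0, 4.0], [3.0, 5.0, 7.0, 9.0])
print(m, b)
```

The closed form gives the answer in one pass over the data. Gradient descent reaches the same line iteratively, which becomes more attractive when there are many parameters or too much data to process at once.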

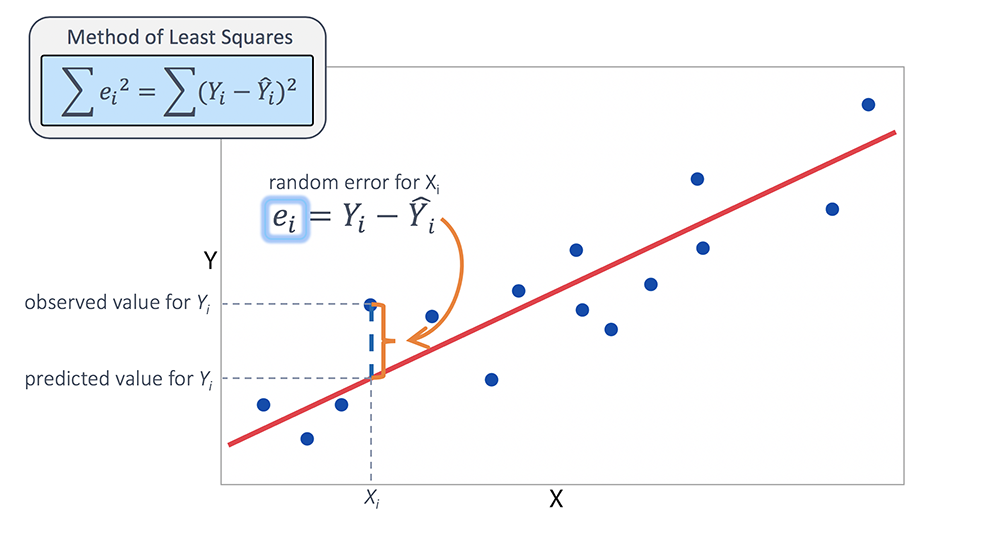

Understanding Cost Function And Gradient Descent

Linear regression provides a useful exercise for learning stochastic gradient descent, an important algorithm that machine learning methods use to minimize cost functions. The linear regression model is defined as y = b0 + b1 * x.

Concept of gradient descent in linear regression: gradient descent is an iterative optimization algorithm used to minimize the cost function in linear regression. The goal is to find the values of the model parameters (weights) that result in the lowest possible cost.
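A minimal sketch of stochastic gradient descent for the model y = b0 + b1 * x might look like the following. It assumes a squared-error cost per sample and updates the parameters after every single data point rather than after a full pass. The function name, the learning rate, the epoch count, the seed, and the toy data are illustrative assumptions, not values from the source.

```python
import random


def sgd_linear_regression(xs, ys, learning_rate=0.02, epochs=1000, seed=0):
    """Fit b0 and b1 in y = b0 + b1 * x with per-sample (stochastic) updates."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    b0, b1 = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # visit the samples in a new random order each epoch
        for i in indices:
            # Error of the current prediction on this one sample.
            error = (b0 + b1 * xs[i]) - ys[i]
            # Gradient of 0.5 * error**2 with respect to b0 and b1.
            b0 -= learning_rate * error
            b1 -= learning_rate * error * xs[i]
    return b0, b1


# Toy data generated from y = 1 + 2x, with no noise.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

b0, b1 = sgd_linear_regression(xs, ys)
print(b0, b1)
```

With noise-free data the estimates should settle near b0 = 1 and b1 = 2. On noisy data, per-sample updates keep jittering around the minimum, which is why a decaying learning rate is often used in practice.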

Gradient Descent In Linear Regression Geeksforgeeks

Gradient Descent In Linear Regression Introduction To Gradient Descent

A Beginners Guide To Gradient Descent Algorithm For Data Scientists